Excel can be a powerful tool for keeping track of scraped data.

You can perform simple tasks like filtering, sorting, using pivot tables, and creating charts based on your data.

But how can you efficiently extract data from the web and get it into Excel for further processing?

In this tutorial, we will walk you through how to use Hexomatic to scrape data from any website and export it to an Excel spreadsheet.

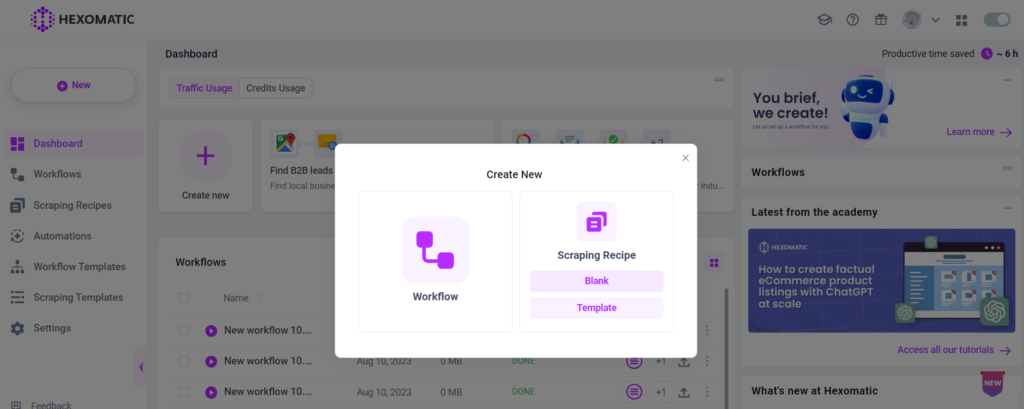

Step 1: Create a new scraping recipe

To get started, create a blank scraping recipe.

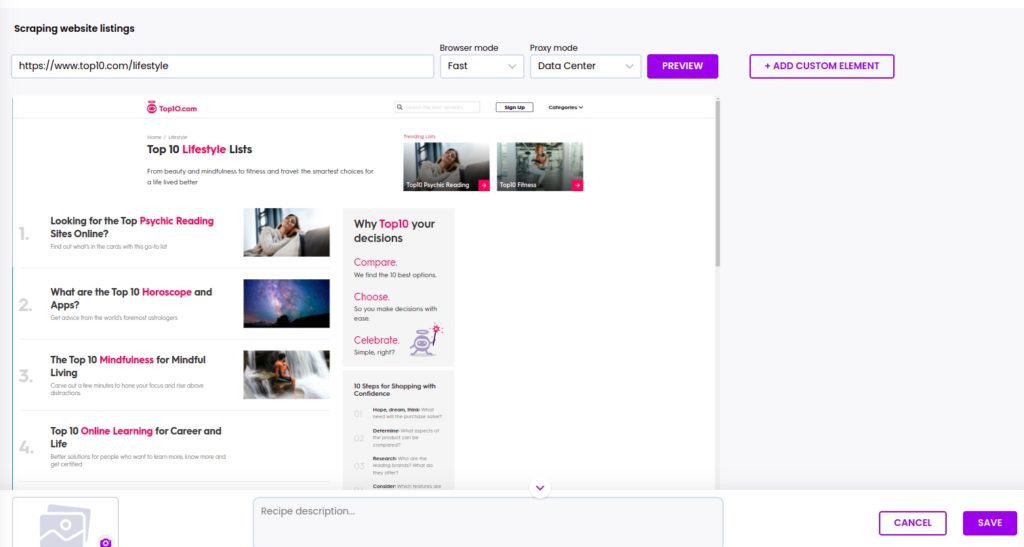

Step 2: Add the web page URL

Add the web page URL and click Preview.

Step 3: Choose the elements to scrape

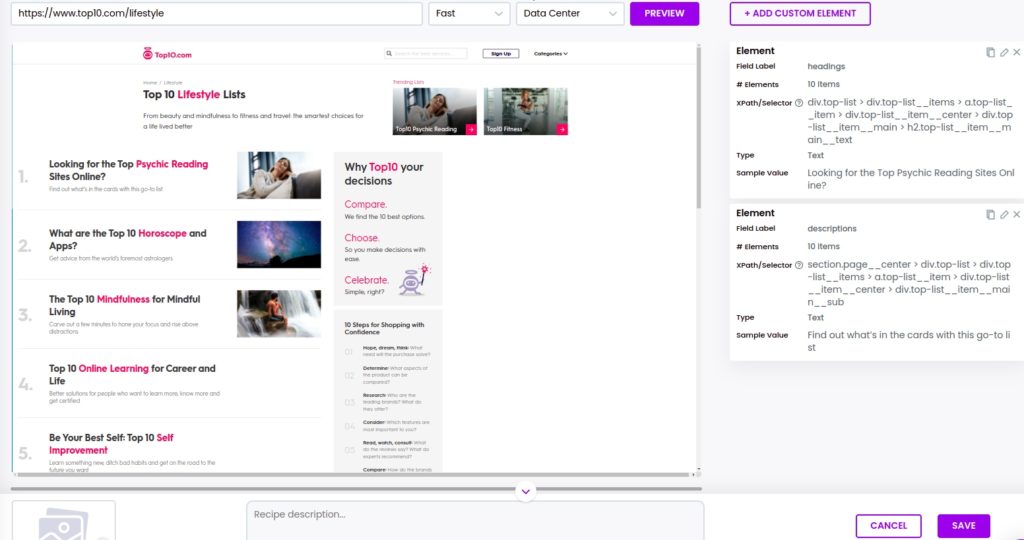

Now, you can select all the elements that you want to scrape.

In this case, we are going to scrape headings and descriptions of articles in the Lifestyle category.

To select every element of the same type on the page, click one of them, then choose the select all option.

Then, click Save.

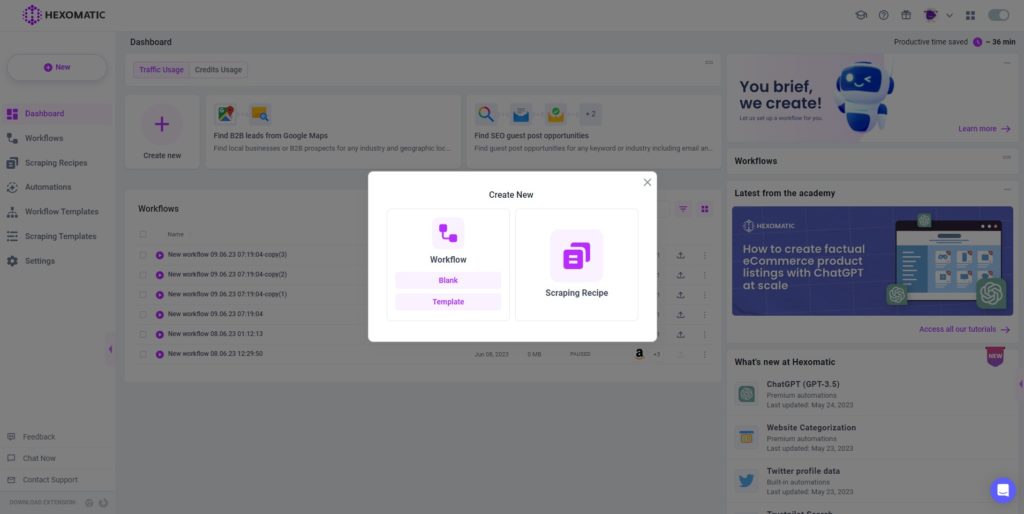

Step 4: Create a new workflow

To run the scraping recipe, you need to create a blank workflow and add the scraping recipe to it.

Create a new workflow, then choose Data automation as your starting point.

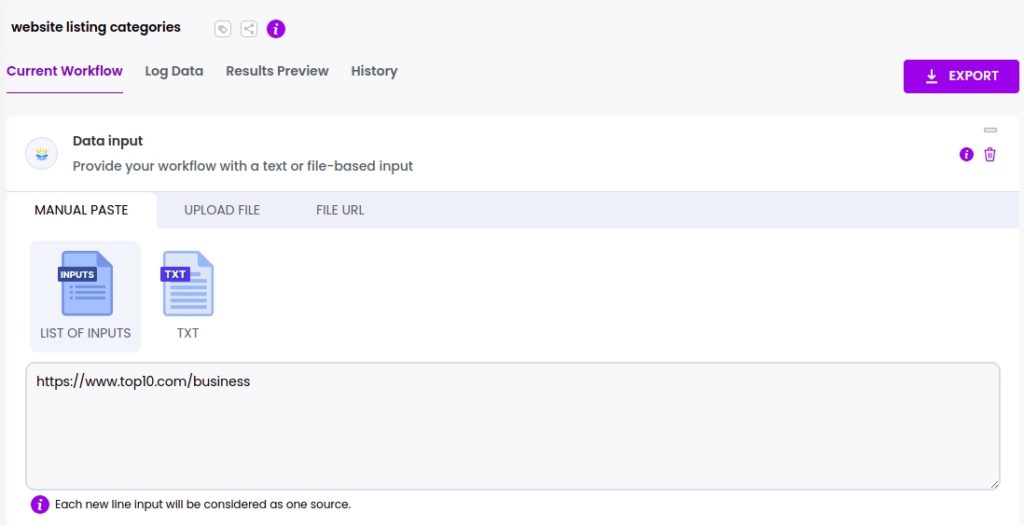

Step 5: Add the web page URLs

Next, add the URLs of the pages you want to scrape using the Manual paste/list of inputs option.

Note that the pages need to share the same HTML structure, so that the recipe can find the same elements on each of them.
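To see why a shared structure matters, here is a minimal Python sketch. It is not how Hexomatic works internally. It applies one extraction rule (collect every `<h2>` heading) to three made-up pages. The `HeadingCollector` class, the `extract_headings` helper, and the HTML snippets are all hypothetical and exist only for illustration.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collects the text of every <h2> element (a stand-in for a scraping rule)."""

    def __init__(self):
        super().__init__()
        self.in_heading = False
        self.headings = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self.in_heading = True
            self.headings.append("")

    def handle_endtag(self, tag):
        if tag == "h2":
            self.in_heading = False

    def handle_data(self, data):
        # Only keep text that appears inside an <h2> element.
        if self.in_heading:
            self.headings[-1] += data


def extract_headings(html):
    parser = HeadingCollector()
    parser.feed(html)
    parser.close()
    return [heading.strip() for heading in parser.headings]


# Hypothetical pages: the first two share a structure, the third does not.
page_a = "<article><h2>Morning routines</h2><p>Start early.</p></article>"
page_b = "<article><h2>Weekend hikes</h2><p>Pack light.</p></article>"
page_c = "<div><span class='title'>Budget travel</span></div>"

print(extract_headings(page_a))  # ['Morning routines']
print(extract_headings(page_b))  # ['Weekend hikes']
print(extract_headings(page_c))  # [] because this page has no <h2>
```

The same rule returns a heading for the first two pages and nothing for the third, which is what happens when a recipe built on one page layout is run against a page with a different layout.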

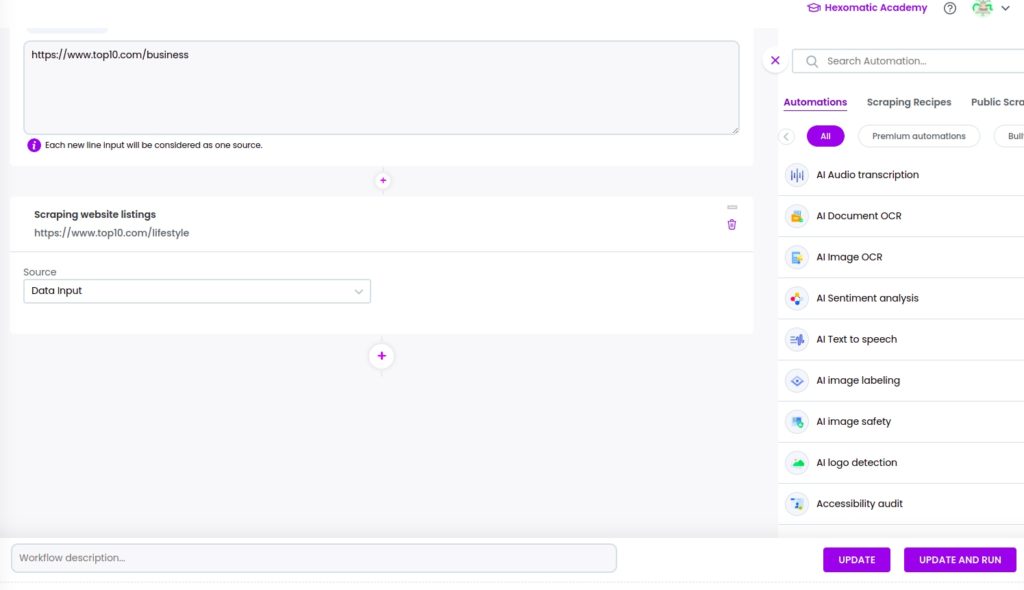

Step 6: Add your scraping recipe

To automatically scrape data from the inserted web pages, add your previously created scraping recipe to the workflow and select data input as the source.

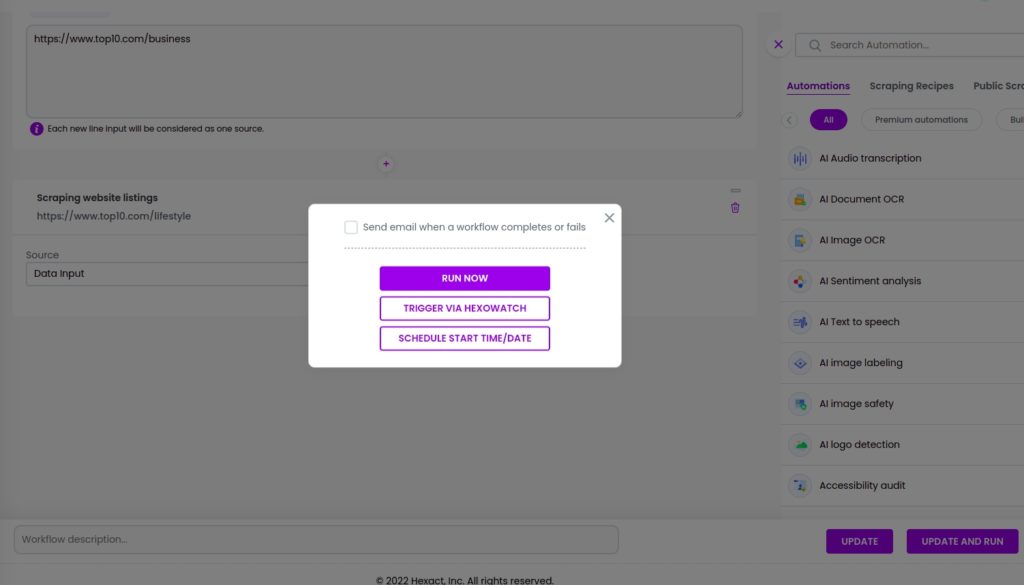

Step 7: Run the workflow

Run your workflow to get the results.

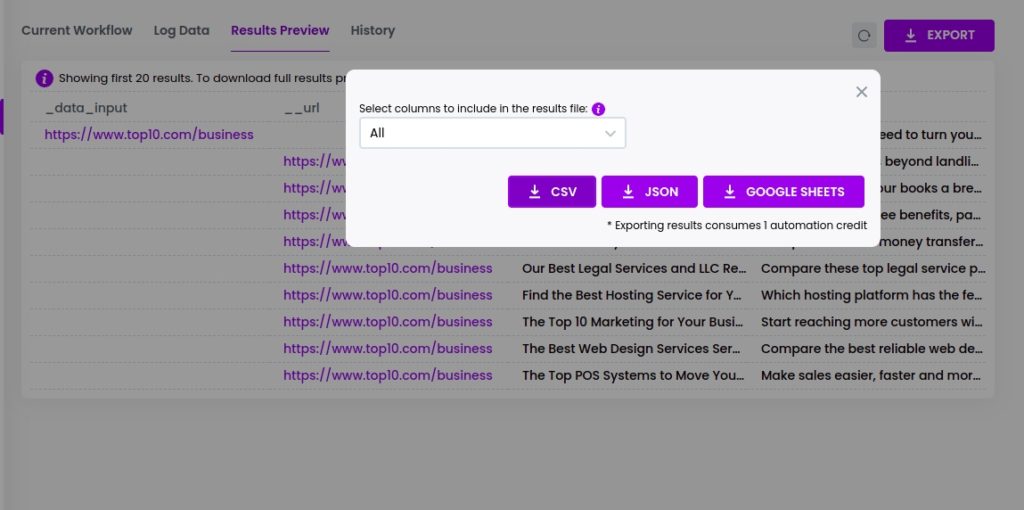

Step 8: View and save the results

Once the workflow has finished running, you can view the results and export them to CSV or Google Sheets.

In this case, we will export the results to a CSV file, which you can open directly in Excel.
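If accented or non-Latin characters look garbled when the CSV is opened in Excel, a common fix is to re-save the file as UTF-8 with a byte order mark, which Excel uses to detect the encoding. The sketch below assumes the export is UTF-8 encoded. The file names (`hexomatic_export.csv`, `excel_ready.csv`), the column names, and the `make_excel_friendly` helper are hypothetical.

```python
import csv


def make_excel_friendly(src_path, dst_path):
    """Copy a UTF-8 CSV to a new file that starts with a UTF-8 byte order mark."""
    # "utf-8-sig" reads the file correctly whether or not it already has a BOM.
    with open(src_path, newline="", encoding="utf-8-sig") as src:
        rows = list(csv.reader(src))
    # On write, "utf-8-sig" puts the byte order mark at the start of the file.
    with open(dst_path, "w", newline="", encoding="utf-8-sig") as dst:
        csv.writer(dst).writerows(rows)
    return len(rows)


# Stand-in for the file downloaded from the workflow results (hypothetical data).
with open("hexomatic_export.csv", "w", newline="", encoding="utf-8") as f:
    csv.writer(f).writerows([
        ["heading", "description"],
        ["Café culture", "Where to find the best espresso"],
    ])

count = make_excel_friendly("hexomatic_export.csv", "excel_ready.csv")
print(f"Wrote {count} rows to excel_ready.csv")
```

From there, the file can be opened in Excel and saved as a workbook to use filtering, sorting, pivot tables, and charts.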


Marketing Specialist | Content Writer

Experienced in SaaS content writing. Helps customers automate time-consuming tasks and solve complex scraping cases with step-by-step tutorials and in-depth articles.
