In this short tutorial, we’ll run through how automations work and how you can use them inside your workflows to perform specific tasks.

Whilst scraping recipes enable you to design your own bot to capture data from any website, automations are pre-built and enable you to perform specific tasks or leverage 3rd party services inside your workflow.

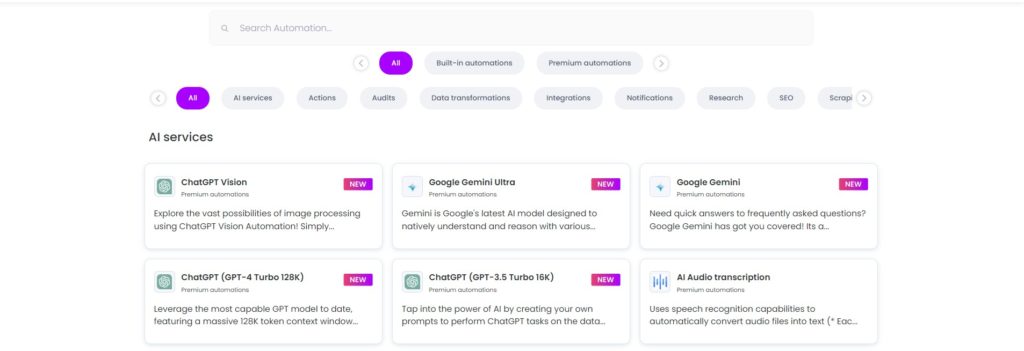

We have 100+ automations available. To make it easier for you to find the right one, we’ve organized them into the following categories:

- AI services

- Actions

- Audits

- Data transformations

- Integrations

- Notifications

- Research

- SEO

- Scraping

- Translation

- Validation

Here are some of the most popular automations for you to consider:

Data input – enables you to provide a list of URLs or text to scraping recipes or automations. It is usually the starting point for any workflow our users create on Hexomatic.

For lead generation and research:

- Google search – enables you to extract search results from Google

- Email scraper – enables you to extract email addresses from any URL

- Social media links scraper – enables you to extract social media profiles from any URL

- Traffic analytics – enables you to get traffic estimates for any domain

- WHOIS – enables you to get WHOIS contact and domain expiry information on any domain

- Tech stack – enables you to get a list of technologies and 3rd party libraries used by any page
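Hexomatic runs these scrapers for you, so no code is needed. To show what the email and social media links scrapers do with a page, here is a minimal Python sketch. It assumes the page HTML has already been downloaded. The function names, the regular expressions and the `SOCIAL_DOMAINS` mapping are illustrative assumptions, not Hexomatic’s implementation, and production scrapers handle many more edge cases.

```python
import re

# Rough pattern for common email addresses; an approximation, not a full parser.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Captures double-quoted href values from anchor tags (kept simple for brevity).
HREF_RE = re.compile(r'href="([^"]+)"')

# Hypothetical mapping of social networks to the domains their profile links use.
SOCIAL_DOMAINS = {
    "facebook": "facebook.com",
    "twitter": "twitter.com",
    "linkedin": "linkedin.com",
}


def extract_emails(html: str) -> list[str]:
    """Return unique, lower-cased email addresses in order of first appearance."""
    seen: dict[str, None] = {}
    for match in EMAIL_RE.findall(html):
        seen.setdefault(match.lower(), None)
    return list(seen)


def extract_social_links(html: str, networks: list[str]) -> dict[str, list[str]]:
    """Group links by social network, keeping only the networks requested."""
    found: dict[str, list[str]] = {name: [] for name in networks}
    for link in HREF_RE.findall(html):
        for name in networks:
            if SOCIAL_DOMAINS[name] in link and link not in found[name]:
                found[name].append(link)
    return found


if __name__ == "__main__":
    html = (
        '<a href="mailto:info@example.com">Email us</a> '
        '<a href="https://www.facebook.com/exampleclinic">Facebook</a> '
        "Contact: Info@Example.com"
    )
    print(extract_emails(html))  # ['info@example.com']
    print(extract_social_links(html, ["facebook", "linkedin"]))
    # {'facebook': ['https://www.facebook.com/exampleclinic'], 'linkedin': []}
```

Choosing which networks to return mirrors the option in the social media links scraper to pick the profiles you care about.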

For SEO:

- Meta tags – enables you to extract all the meta tags used on any page

- SEO backlink explorer – allows you to find existing backlinks for an entire website or specific webpage

For AI services:

- ChatGPT Vision – enables you to process and analyze images using ChatGPT Vision.

- Google Gemini Ultra – Gemini is Google’s latest AI model, designed to natively understand and reason with various types of text data.

- ChatGPT (GPT-4 Turbo 128K) – enables you to use the most capable GPT model to date, with a 128K token context window, to perform a wide range of tasks at scale.

- AI audio transcription – enables you to transcribe hours of audio in a wide range of languages in minutes.

- AI text to speech – enables you to convert text into speech that imitates the human voice via Google Text-to-Speech.

For making changes to the data you collect:

- Number transformation – enables you to change number formatting in your data

- Date transformation – enables you to change date formats in your data

- Text transformation – enables you to change text formatting in your data

- Measurement converter – enables you to change one unit to another in your data
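Here is a rough illustration of what the transformation automations do to each value, written as a small Python sketch that uses only the standard library. The function names, date formats and unit table are hypothetical choices for the example, not Hexomatic’s actual settings.

```python
from datetime import datetime


def transform_date(value: str, src_fmt: str, dst_fmt: str) -> str:
    """Parse a date string written in src_fmt and re-emit it in dst_fmt."""
    return datetime.strptime(value, src_fmt).strftime(dst_fmt)


def transform_number(value: str, decimals: int = 2) -> str:
    """Format a numeric string with thousands separators and fixed decimals."""
    return f"{float(value):,.{decimals}f}"


def transform_text(value: str) -> str:
    """Collapse runs of whitespace, trim the ends, then title-case the text."""
    return " ".join(value.split()).title()


# Conversion factors to a base unit (metres); an illustrative subset only.
TO_METRES = {"m": 1.0, "km": 1000.0, "mi": 1609.344, "ft": 0.3048}


def convert_length(value: float, src: str, dst: str) -> float:
    """Convert a length between units by going through metres."""
    return value * TO_METRES[src] / TO_METRES[dst]


if __name__ == "__main__":
    print(transform_date("03/15/2024", "%m/%d/%Y", "%Y-%m-%d"))  # 2024-03-15
    print(transform_number("1234567.891"))  # 1,234,567.89
    print(transform_text("  bright   smile dental "))  # Bright Smile Dental
    print(convert_length(10, "mi", "km"))  # about 16.09
```

Converting through a single base unit keeps the table to one factor per unit, rather than one factor per pair of units.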

We also have notification automations including:

- Telegram – send a notification via Telegram

- Slack – send a notification via Slack

- Discord – send a notification via Discord
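Under the hood, a chat notification is usually a small JSON message posted to a webhook. The sketch below assumes a Slack incoming webhook, which accepts a JSON body with a `text` field. It only builds the request with Python’s standard library and does not send it. The webhook URL is a hypothetical placeholder, and this is not how Hexomatic’s Slack automation is configured, since Hexomatic handles the connection for you.

```python
import json
import urllib.request

# Hypothetical placeholder; a real incoming-webhook URL is generated in your
# Slack workspace and should be kept secret.
WEBHOOK_URL = "https://hooks.slack.com/services/T000/B000/XXXX"


def build_slack_notification(text: str, url: str = WEBHOOK_URL) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for a Slack incoming webhook."""
    body = json.dumps({"text": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_slack_notification("Your workflow has finished running")
    print(req.get_method(), req.full_url)
    # Sending it would be: urllib.request.urlopen(req)
```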

You can find out more about our 100+ automations in the “Automations” section of Hexomatic.

How to use automations?

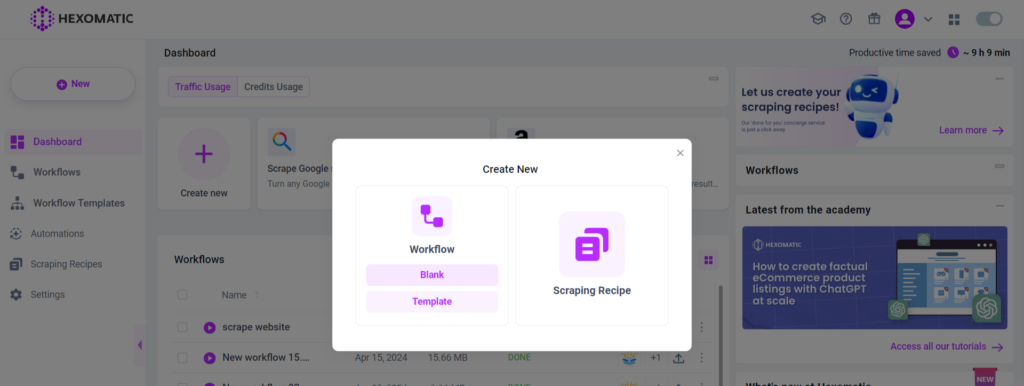

To use any automation or scraping recipe, you first need to create a new workflow. You can then add the automations you need.

Let’s run through a quick example that uses the Google search automation to find leads, and the email and social media links scrapers to find contact information for each website.

1/ To get started, create a new blank workflow from your dashboard:

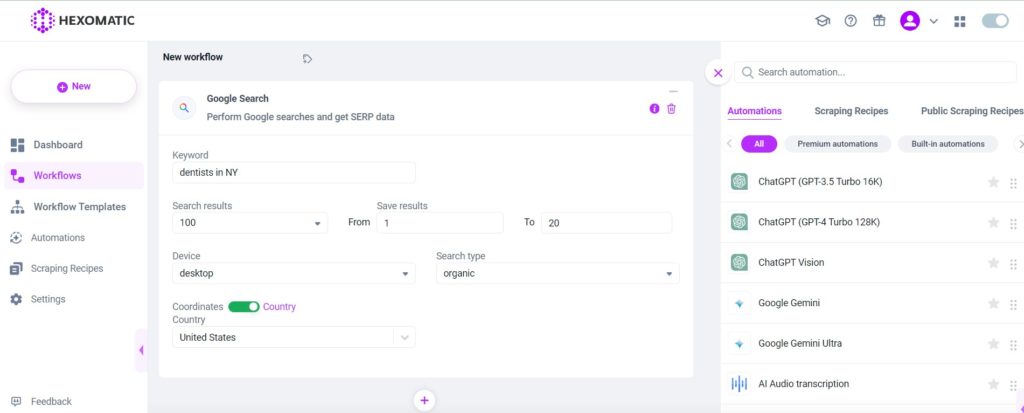

2/ Then choose the Google search automation, specifying your search query and the geographic location you are targeting. In this example, let’s look for dentists in NY.

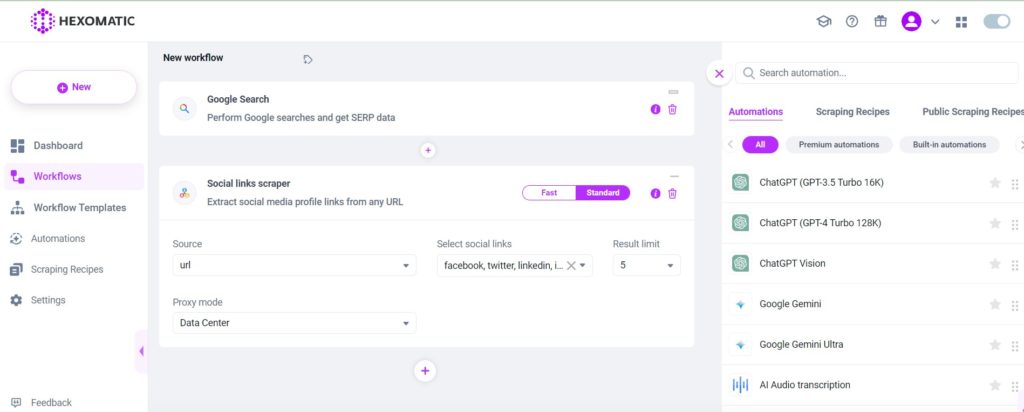

3/ Next, add the social media links scraper and use the URL field from the Google search automation as the source. This will run the social media links scraper on every URL listed in the search results.

You can then specify which social profile links you are interested in, for example, Facebook, Twitter, LinkedIn, etc.

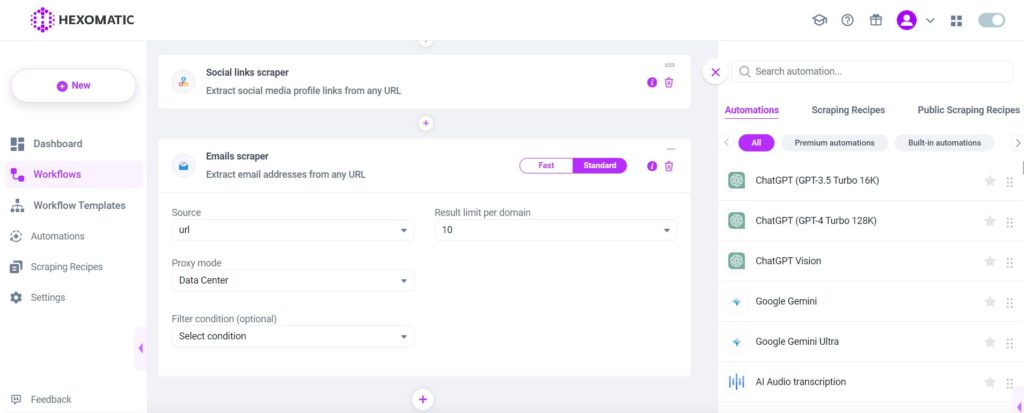

4/ Next, add the email scraper and use the URL field from the Google search automation as the source. This will look for email addresses listed on every URL found in the search results.

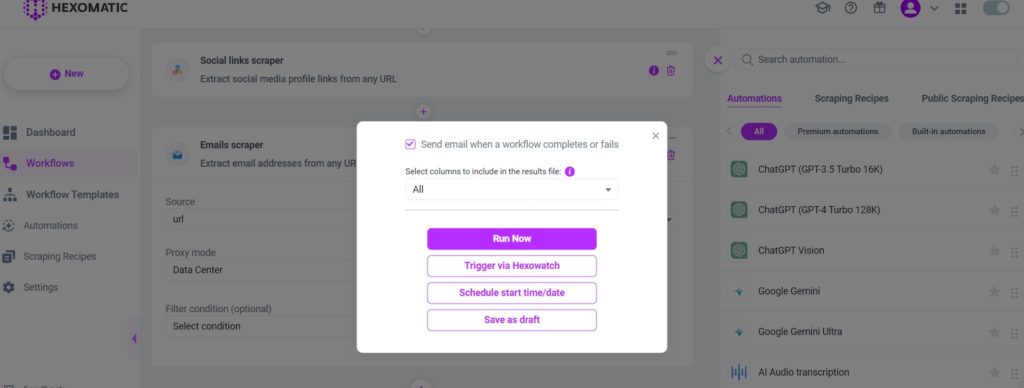

5/ Name your workflow and click Continue.

You can then run the workflow immediately or schedule it to run later.

Once the workflow has completed, you can download the results as a .csv file.
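To make the data flow of this example concrete, here is a minimal Python sketch of the same chain. Each URL from the search results is passed to a social links step and an email step, and the combined rows are written out as CSV. It assumes the search results are already available as a list of dicts with `title` and `url` keys, and that `fetch_page` is a hypothetical function that returns a page’s HTML. Neither is part of Hexomatic, which performs all of these steps for you without code.

```python
import csv
import io
import re
from typing import Callable

# Rough email pattern; an approximation, not a full RFC 5322 parser.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Captures double-quoted href values from anchor tags.
HREF_RE = re.compile(r'href="([^"]+)"')


def run_workflow(
    search_results: list[dict[str, str]],
    fetch_page: Callable[[str], str],
    social_domains: list[str],
) -> str:
    """Run the social-link and email steps on every result URL; return CSV text."""
    rows = []
    for result in search_results:
        html = fetch_page(result["url"])  # hypothetical page fetcher
        # Social links step: keep only links pointing at the requested networks.
        socials = [
            link for link in HREF_RE.findall(html)
            if any(domain in link for domain in social_domains)
        ]
        # Email step: unique, lower-cased addresses in order of appearance.
        emails = dict.fromkeys(e.lower() for e in EMAIL_RE.findall(html))
        rows.append({
            "title": result["title"],
            "url": result["url"],
            "social_links": "; ".join(dict.fromkeys(socials)),
            "emails": "; ".join(emails),
        })

    # Export one row per search result, similar to the downloadable .csv file.
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["title", "url", "social_links", "emails"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    # Placeholder page standing in for a real website (reserved example.com domain).
    pages = {
        "https://dentist-a.example.com":
            '<a href="https://www.facebook.com/dentista">FB</a> hello@dentist-a.example.com',
    }
    results = [{"title": "Dentist A", "url": "https://dentist-a.example.com"}]
    print(run_workflow(results, lambda url: pages[url], ["facebook.com", "linkedin.com"]))
```

Passing `fetch_page` in as a parameter keeps the sketch testable offline. A real scraper would replace it with an HTTP client and add error handling for pages that fail to load.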


Content Writer | Marketing Specialist

Experienced in writing SaaS and marketing content, helps customers easily perform web scraping, automate time-consuming tasks and stay informed about the latest tech trends with step-by-step tutorials and insider articles.
